The privacy tradeoff no one talks about in AI finance tools

When you connect your bank to an AI assistant, what actually happens to that data? We broke down how five popular tool patterns work.

The Tablewealth Team

May 1, 2026·9 min read·Privacy

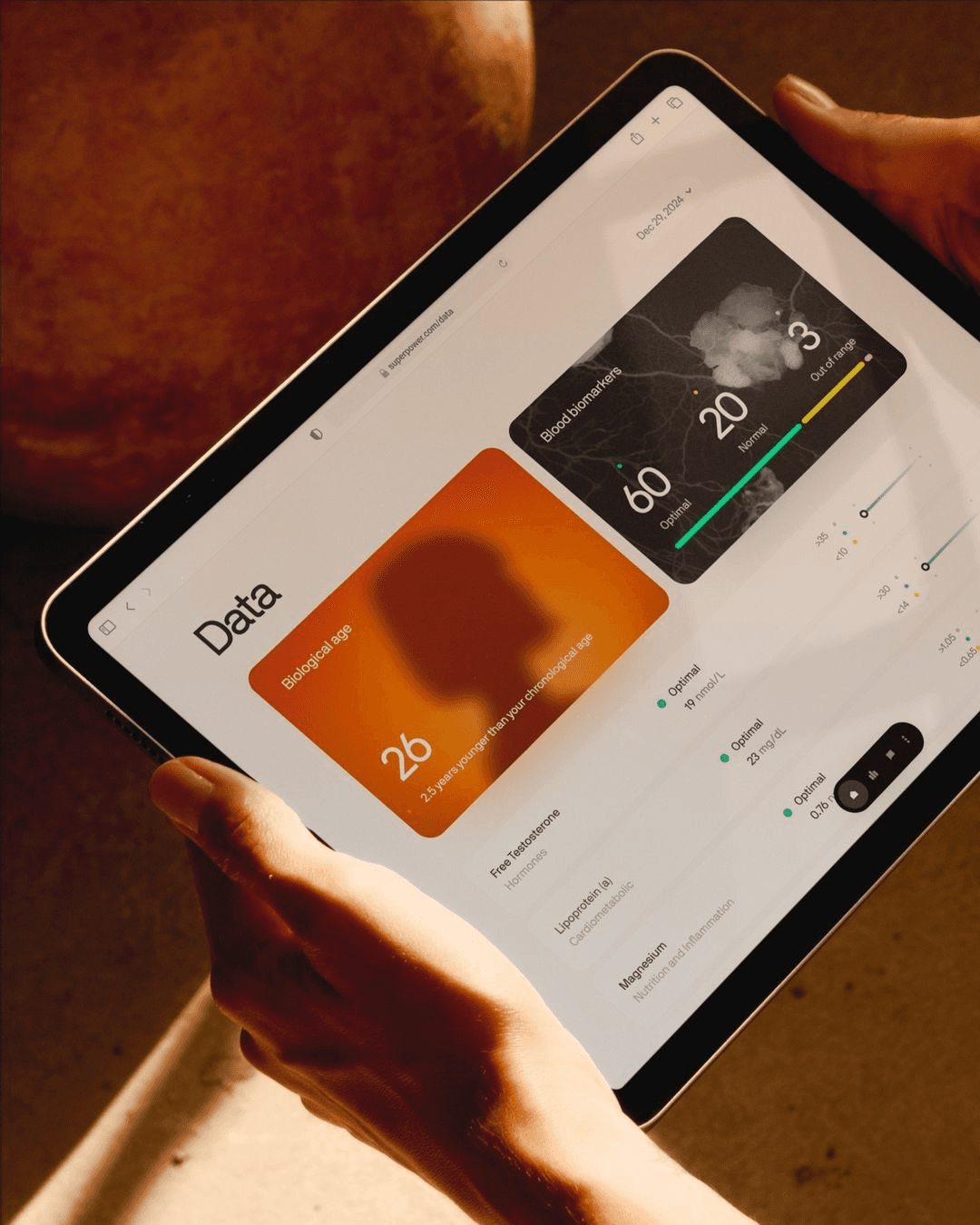

AI finance tools are often evaluated by how clever their answers sound. A better first question is quieter: what data did the system need to see in order to answer at all?

When an assistant receives balances, merchants, paychecks, holdings, and timing patterns, it is not just reading numbers. It is reading a behavioral fingerprint. That fingerprint can reveal where you live, how you work, what you worry about, and who shares financial responsibility with you.

Most finance apps ask users to accept a simple trade: give the product broad access to sensitive account data, and the product will return budgeting insights, recommendations, or automation. AI makes that bargain feel more powerful, but it also expands the surface area across which data can travel.

Data minimization still matters

The safest data flow is the one that never happens. If a model can build an interface from layout preferences and data shapes, it does not need the underlying account records. That is the design line Tablewealth tries to preserve.
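To make "layout preferences and data shapes" concrete, here is a minimal sketch of what a schema-only payload could look like. It is an illustration under stated assumptions, not Tablewealth's actual implementation: the function names, the field names, and the payload layout are all hypothetical.

```python
from typing import Any


def shape_of(value: Any) -> str:
    """Return a coarse type label for a value, never the value itself."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "boolean"
    if isinstance(value, (int, float)):
        return "number"
    if isinstance(value, str):
        return "string"
    if value is None:
        return "null"
    return "unknown"


def schema_from_records(records: list[dict[str, Any]]) -> dict[str, list[str]]:
    """Collect each field name and the sorted type labels seen for it."""
    seen: dict[str, set[str]] = {}
    for record in records:
        for field, value in record.items():
            seen.setdefault(field, set()).add(shape_of(value))
    return {field: sorted(labels) for field, labels in sorted(seen.items())}


def build_model_payload(
    records: list[dict[str, Any]], layout_preferences: dict[str, Any]
) -> dict[str, Any]:
    """Build what the AI layer would receive: shapes and preferences only."""
    return {
        "schema": schema_from_records(records),
        "layout": dict(layout_preferences),
    }


# Hypothetical records that stay on the trusted side of the boundary.
records = [
    {"merchant": "Corner Grocery", "amount": 42.5, "pending": False},
    {"merchant": "City Transit", "amount": 3, "memo": None},
]
payload = build_model_payload(records, {"view": "table", "group_by": "merchant"})
print(payload)
```

The model sees that a `merchant` field exists and holds strings, but never sees which merchants appear in the account. Field names can still be sensitive in some products, so a real system might also need to review or rename them before they leave the boundary.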

"Useful financial software should not make exposure feel inevitable."

Practical tests for any finance tool

- Can the product explain which systems receive raw account data?

- Can you revoke access without losing your whole workspace?

- Does the AI layer receive records, summaries, schemas, or only interface preferences?

- Are provider errors normalized before they reach the main dashboard UI?
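The last test is easier to reason about with an example. The sketch below assumes that raw provider error bodies can contain account details, which is why they would be worth normalizing before display. The status-code mapping, the `UiError` type, and the messages are hypothetical and do not describe any specific provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UiError:
    """The only error shape the dashboard is allowed to render."""
    code: str
    message: str


# Fixed, reviewed messages: nothing here is copied from a provider response.
_SAFE_MESSAGES = {
    "auth_expired": "Your connection to this institution needs to be renewed.",
    "rate_limited": "The provider is busy. Try again shortly.",
    "unavailable": "The provider is temporarily unavailable.",
}

# Hypothetical mapping from HTTP status to an internal error code.
_STATUS_TO_CODE = {
    401: "auth_expired",
    403: "auth_expired",
    429: "rate_limited",
    503: "unavailable",
}

_FALLBACK_MESSAGE = "Something went wrong while syncing."


def normalize_provider_error(status: int, raw_body: str) -> UiError:
    """Map a provider failure to a fixed code and message.

    raw_body is accepted but deliberately never copied into the result,
    because it may carry account identifiers or other sensitive detail.
    """
    code = _STATUS_TO_CODE.get(status, "unknown")
    return UiError(code=code, message=_SAFE_MESSAGES.get(code, _FALLBACK_MESSAGE))


error = normalize_provider_error(401, "token expired for account 000123456")
print(error.code, "-", error.message)
```

The design choice is that the UI can only ever show one of a small set of pre-written messages, so a verbose provider response cannot leak into a screenshot or a shared dashboard.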

A better default

The default should be scoped access, clear boundaries, and user-owned outputs. Privacy should not be a pro feature or a buried setting. It should be part of the architecture.